Case Study

Introduction

Our company runs a network of large, ad-supported content websites. Before we discovered IPBlock, we had three main problems with no clear solution.

First, when we examined our logs, we saw that about half of our traffic came from sources that either did not benefit us or were clearly malicious. These sources included site scrapers, research and marketing crawlers, exploit attempts, password attacks, and many similar varieties.

Second, we encountered sporadic DDoS attacks. Several of the attacks were prolonged, but most were probing in nature and lasted from several minutes to an hour. These attacks sometimes caused service interruptions and significantly increased our hosting costs through bandwidth overages and the need to maintain excess infrastructure capacity.

Finally, our ad partners complained that a noticeable portion of our traffic came from robotic sources. This reduced the perceived quality of our sites and, in turn, the advertising rates we received. We could trace most of this robotic traffic to public clouds such as Google or AWS, as well as other data centers, but its variety and sophisticated obfuscation meant we needed a more straightforward way to detect a significant portion of it.

We needed a solution that would address these three issues in a simple, manageable, and transparent way.

Our infrastructure

We use our own CDN, which runs on 15 Windows servers colocated around the world. In addition, we use several other servers for “command and control”: back-office processing, analytics, and exchange services. We have a large global audience that accesses our services primarily via browsers, mobile apps, and APIs.

Our servers are open to the world on:

- Websites on ports 80 and 443

- Public API / mobile app ports

- Email server ports

- Internal / back-office ports

- Admin ports

How we solved our bandwidth and DDoS problems with IPBlock

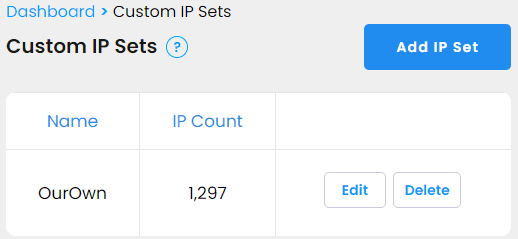

First, as recommended by IPBlock, we created a custom IP set containing all our own IPs and whitelisted it in all our rules.

The rules themselves vary depending on the port purpose.

Website, public API, mobile app ports

We created two combinations of IP multisets and rules for public ports that receive most of the traffic.

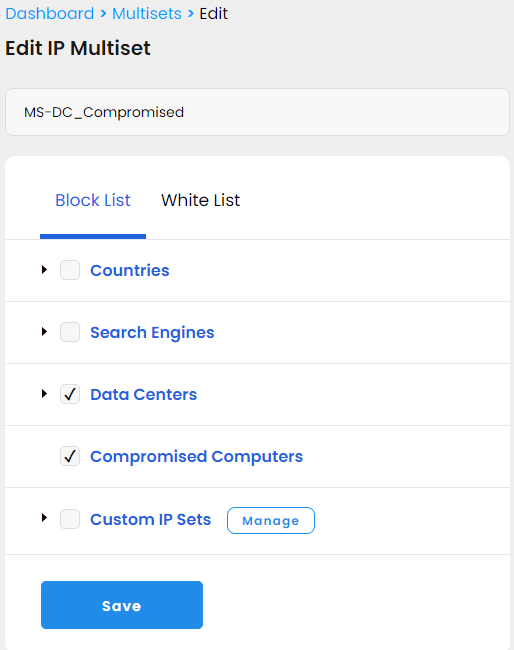

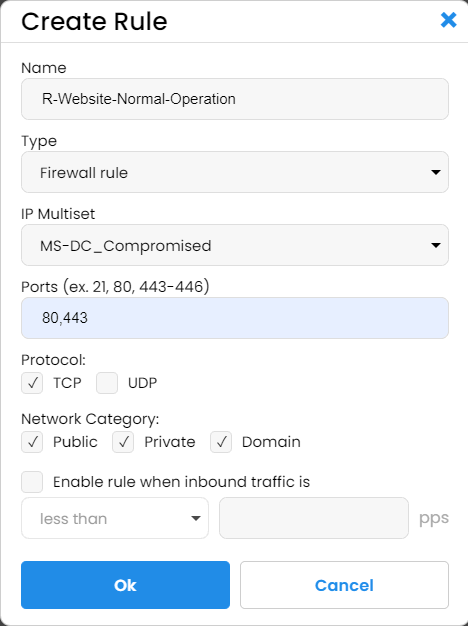

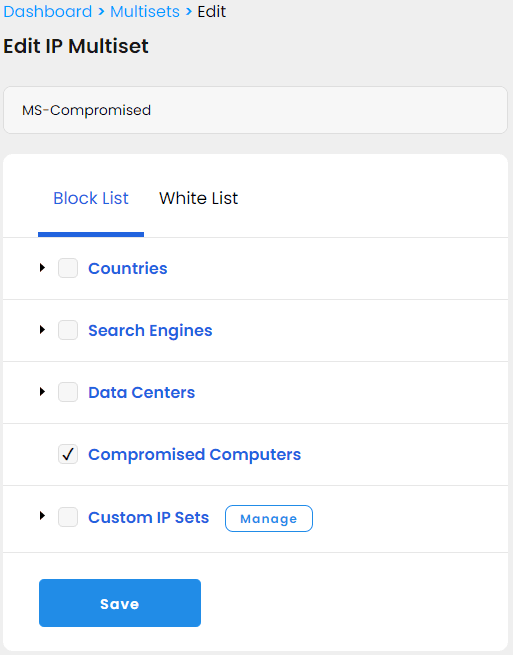

The first rule is permanent and runs constantly. Its multiset blocks a superset of two IP sets provided and maintained by IPBlock: compromised computers and datacenters.

After enabling this rule, we saw bad traffic drop immediately by as much as 80%. At the same time, we received no complaints from users unable to access our service. We have not had any DDoS attacks since we enabled this rule, but we collected IPs from the previous attacks and tested a random set of them against our blocking multiset using the IPBlock test feature. The test showed that the new rule would mitigate over 80% of the attack, so we are optimistic that our infrastructure will hold up well in future attacks.

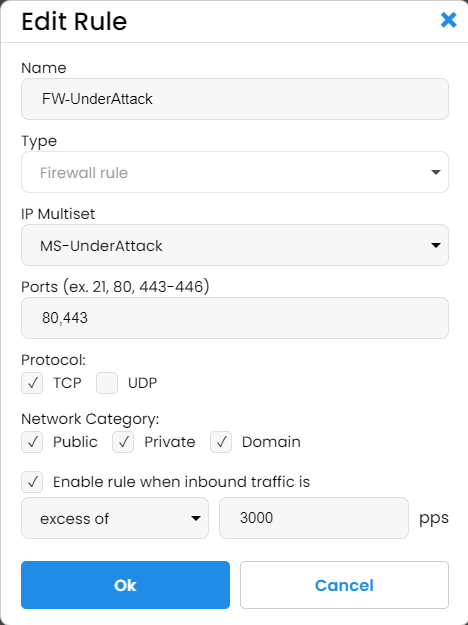
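The test described above was run with IPBlock's own test feature. As a rough illustration of the idea only, the same kind of coverage check can be sketched in Python. The function name, the sample size, and the CIDR ranges are assumptions made for this example and are not part of IPBlock.

```python
import ipaddress
import random


def blocked_share(attack_ips, blocked_networks, sample_size=1000, seed=0):
    """Return the fraction of a random sample of attack IPs covered by a block list.

    attack_ips: IP address strings collected from past attacks.
    blocked_networks: CIDR strings standing in for a blocking multiset (hypothetical).
    """
    networks = [ipaddress.ip_network(n) for n in blocked_networks]
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    sample = rng.sample(attack_ips, min(sample_size, len(attack_ips)))
    if not sample:
        return 0.0
    hits = sum(
        any(ipaddress.ip_address(ip) in net for net in networks)
        for ip in sample
    )
    return hits / len(sample)


# Documentation-only address ranges used as placeholder data.
past_attack_ips = ["203.0.113.5", "203.0.113.77", "198.51.100.9", "192.0.2.1"]
block_list = ["203.0.113.0/24", "198.51.100.0/24"]
share = blocked_share(past_attack_ips, block_list)
```

A share above 0.8 on real attack logs would correspond to the "over 80%" result we saw in the IPBlock test.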

The second rule we created is traffic-conditional and precautionary.

We have not yet seen it activated, but we feel safer knowing it is there. Here is how we set it up. The IP multiset for this rule is much broader: in addition to compromised computers and datacenters, it also blocks second-tier search engines and all the countries that are not critical for our revenues. The rule is set to activate when incoming requests (packets per second) are 5 times greater than our normal peak levels. In other words, it is set to activate in case of an attack.

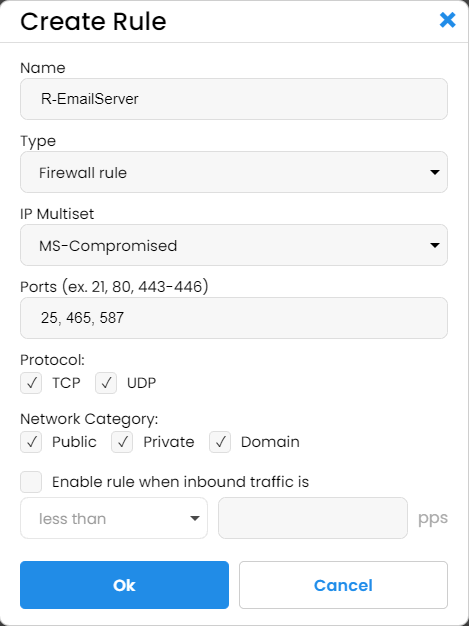
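The activation condition above is configured in the IPBlock interface, not in code. Purely to make the threshold logic concrete, here is a minimal Python sketch. The class name, field names, and the example peak value are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConditionalRule:
    """Hypothetical model of a traffic-conditional rule."""

    normal_peak_pps: float   # normal peak load, in packets per second
    multiplier: float = 5.0  # activate above 5x the normal peak

    def should_activate(self, current_pps: float) -> bool:
        # The rule turns on only when traffic exceeds the threshold.
        return current_pps > self.normal_peak_pps * self.multiplier


rule = ConditionalRule(normal_peak_pps=2000)  # example value, not our real peak
quiet = rule.should_activate(9000)    # below 10000 pps
attack = rule.should_activate(10001)  # above 10000 pps
```

Tying the broader block list to a threshold keeps it out of the way during normal operation, so visitors from the extra blocked countries are only affected while an attack is in progress.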

Email server

Email servers have not been a big source of concern for us so far, but we decided to try IPBlock on the SMTP, IMAP, and POP3 ports anyway by blocking the IP list of compromised computers.

Immediately, we saw in the logs a significant drop in malicious traffic and even spam. (Good riddance!) As a result, this rule is here to stay.

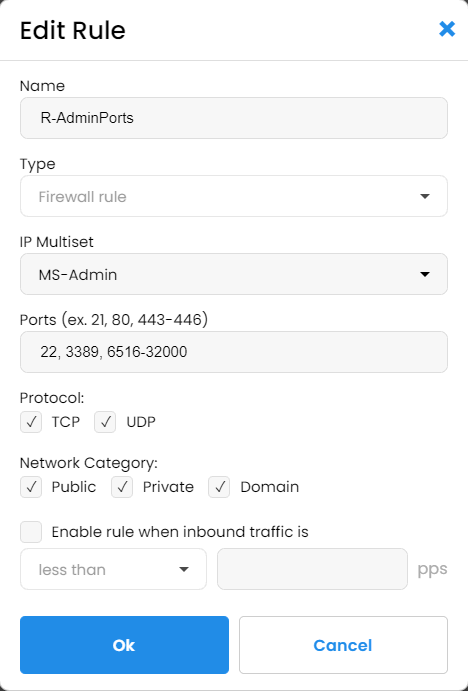

Internal API and admin ports

We decided to go overboard and block everything that’s not us for our internal ports. We did not find a built-in way in IPBlock to block everything, so we created our own custom IP set called “Everything” with the IP range 0.0.0.0-255.255.255.255. We included this set as the blocking set and, as usual, whitelisted our own IPs. The resulting multiset blocks everyone who is not on our list, and we enabled it for the admin ports on all our externally facing servers. Afterward, it was a pleasure to re-examine our security logs and see no more failed login attempts from dictionary attacks.

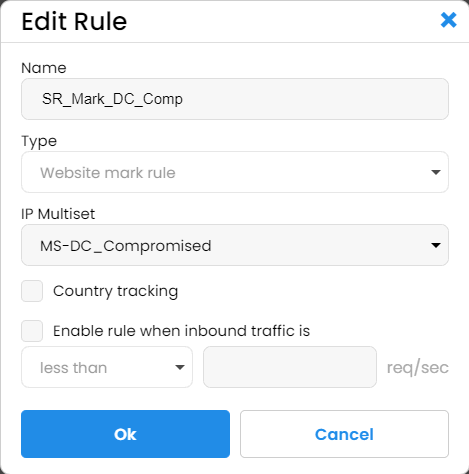
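The effect of combining the "Everything" block set with a whitelist can be sketched in a few lines of Python. This is an illustration under assumptions, not IPBlock's implementation: the function name and the whitelist range are made up, and the sketch assumes the whitelist takes precedence over the block set.

```python
import ipaddress

# 0.0.0.0/0 covers the same addresses as the range 0.0.0.0-255.255.255.255.
EVERYTHING = ipaddress.ip_network("0.0.0.0/0")

# Placeholder for our own IPs (documentation-only range).
OWN_IPS = [ipaddress.ip_network("192.0.2.0/28")]


def is_blocked(client_ip, block_nets, allow_nets):
    """Return True if client_ip should be blocked; the whitelist wins."""
    addr = ipaddress.ip_address(client_ip)
    if any(addr in net for net in allow_nets):
        return False
    return any(addr in net for net in block_nets)


ours = is_blocked("192.0.2.5", [EVERYTHING], OWN_IPS)
stranger = is_blocked("198.51.100.20", [EVERYTHING], OWN_IPS)
```

Because every address falls inside the block set, access is decided entirely by the whitelist, which is the default-deny behavior we wanted on admin ports.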

Ad quality problem

For this issue, we reused our compromised-plus-datacenter IP multiset with what IPBlock calls a "website marking rule," and then enabled tracking for this rule.

After we added this rule to the server for our main website, we got an ISAPI filter that adds a special IPBlock request header to all matching requests. The rest was trivial: a simple check in the website code for the value of the "Local-IPBlock-Tracking" header. If it equals "CC_DC", we replace the usual real-user ad code with plain house banners. The result? No more ad requests to our ad partners from robotic traffic. We also saw no measurable drop in ad revenues immediately after enabling this code, which indicates that we have not filtered out any valid traffic. Plus, our ad partners report no more issues with our site.
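The header check described above might look like the following. This is a language-neutral sketch written in Python, not our production code. The header name and the "CC_DC" value come from our setup, while the function name and the HTML snippets are placeholders.

```python
TRACKING_HEADER = "Local-IPBlock-Tracking"
ROBOTIC_MARK = "CC_DC"  # value set for the compromised + datacenter multiset


def choose_ad_markup(headers, partner_ad_html, house_banner_html):
    """Pick the ad markup for a request based on the IPBlock tracking header."""
    # HTTP header names are case-insensitive, so normalize them before lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    if normalized.get(TRACKING_HEADER.lower()) == ROBOTIC_MARK:
        return house_banner_html  # marked request: no call to ad partners
    return partner_ad_html


marked = choose_ad_markup(
    {"local-ipblock-tracking": "CC_DC"}, "<partner-ad/>", "<house-banner/>"
)
unmarked = choose_ad_markup(
    {"User-Agent": "ExampleBrowser"}, "<partner-ad/>", "<house-banner/>"
)
```

Marked visitors still get the page itself; only the ad slot changes, so a false positive costs one ad impression and nothing more.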

Conclusion

We were able to solve our main problems in a way that:

- Keeps us in full control of the rules

- Gives us a clear understanding of what is being done

- Allows us to manage multiple servers from a single place

- Provides us with historic reports and real-time monitoring

Update, Dec 2022

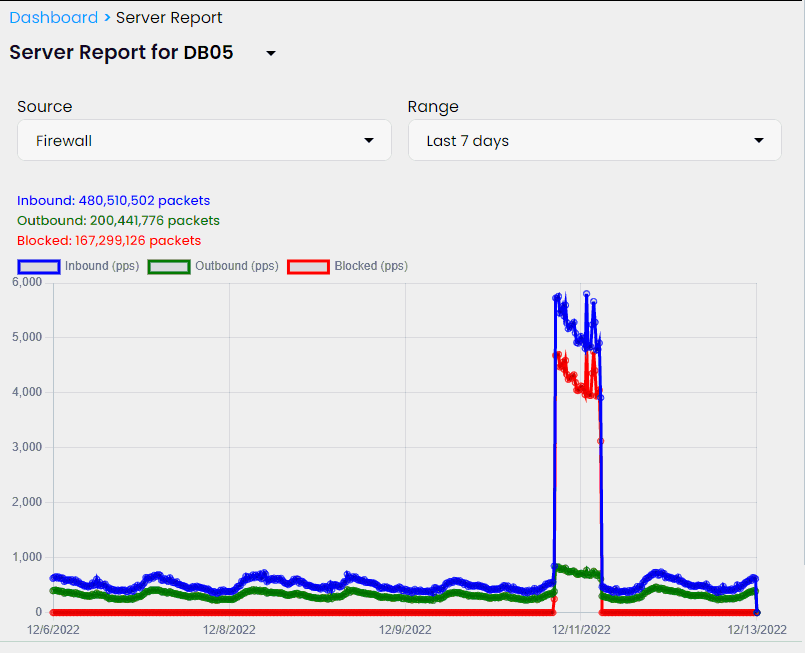

Two days ago, we observed the first attack on our website since installing IPBlock. Thankfully, this attack wasn't massive, but we could still see how IPBlock responded and estimate its effectiveness against possible bigger attacks.

According to the IPBlock charts, the incoming request frequency jumped to roughly 8-9 times our normal levels, while our server responses only doubled. That indicates that IPBlock handled the majority of the malicious traffic, which did not create any significant extra load for our site.
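For a rough sense of scale, the chart readings above can be turned into an estimate of how much of the extra traffic was absorbed. This is back-of-the-envelope arithmetic, not a figure reported by IPBlock. It assumes that legitimate traffic stayed at its normal level during the attack and that every request that got through produced one server response.

```python
def absorbed_share(request_multiple, response_multiple):
    """Estimate the fraction of above-normal requests that never reached the site.

    Both arguments are multiples of the normal level (1.0 means normal).
    """
    extra_requests = request_multiple - 1.0
    extra_responses = response_multiple - 1.0
    if extra_requests <= 0:
        raise ValueError("request_multiple must be greater than 1")
    return 1.0 - extra_responses / extra_requests


low = absorbed_share(8, 2)   # requests at 8x normal, responses at 2x
high = absorbed_share(9, 2)  # requests at 9x normal, responses at 2x
```

Under these assumptions, the estimate falls between about 86% and 88%, in line with the "over 80%" figure from our earlier test.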